AI is here and it will change everything! OMG the sky is falling! Programmers are now obsolete. No, they are needed more than ever before. Large language models will destroy journalism, democracy, society. No, they will free us from drudgery and we will all be happier. Cancer will be solved because of AI. AI’s hallucinations will usher in a new era of disinformation.

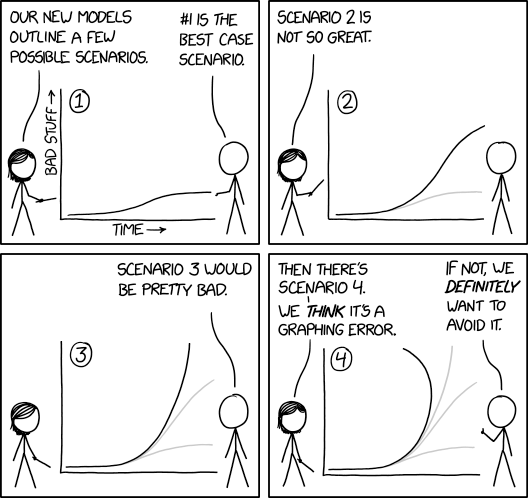

I just returned from a three-month sabbatical spent mostly offline diving through history and I feel like I’ve returned to an alien planet full of serious utopian and dystopian thinking swirling simultaneously. I find myself nodding along because both the best case and worst case scenarios could happen. But also cringing because the passion behind these declarations has no room for nuance. Everything feels extreme and full of binaries. I am truly astonished by the deeply entrenched deterministic thinking that feels pervasive in these conversations.

Deterministic thinking is a blinkering force, the very opposite of rationality even though many people who espouse deterministic thinking believe themselves to be hyper rational. Deterministic thinking tends to seed polarization and distrust as people become entrenched in their vision of the future. Returning to the modern world, I’m finding myself frantically waving my hands in a desperate attempt to get those around me to take a deep breath (or maybe 100 of them). Given that few people can see my hand movements from my home office, I’m going to pretend like it’s 2004 and blog my thoughts in the hopes that this post might calm at least one person down. Or, maybe, if I’m lucky, it’ll be fed into an AI model and add a tiny bit of nuance.

What is deterministic thinking?

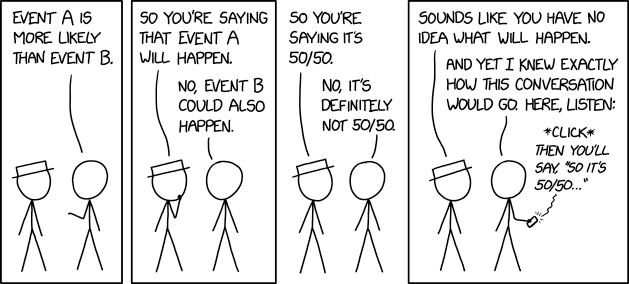

Simply put, determinism is “if x, then y.” It is the notion that if we do something (x), a particular outcome (y) is inevitable. Determinisms are not inherently positive or negative. It is just as deterministic to say “if we build social media, the world will be a more connected place” as it is to say “social media will destroy democracy.”

It is extraordinarily common for people who are excited about a particular technology to revert to deterministic thinking. And they’re often egged on to do so. Venture capitalists want to hear deterministic thinking in sales pitches. Same with the National Science Foundation. In many social science fields, these futuristic speech acts get labeled in a pejorative manner as “technological determinism.” Inventors and corporations are often accused of being deterministic, which is a shorthand intended to dismiss their rhetoric as ahistoric and socially oblivious. (Academics who study technological determinism are often more nuanced in this scholarship than their blog posts and op-eds.)

Meanwhile, however, tech critics often fall into the same trap. Many professional critics (including academics, journalists, advocates) are incentivized to do so because such rhetoric appeals to funders and makes for fantastic opinion pieces. Their rhetoric is rarely labeled deterministic because that’s not the language of futurists. Rather, they are typically dismissed as being clueless about technology and anti-progress. They’re regularly not invited to “the party” (where the technology is being debated by those involved in creating it) because they’re seen as depressing.

Determinism’s ugly step-sibling is “solutionism.” Solutionism is the belief that x will be a solution not just to achieve y but to achieve all possible y’s. Solutionists tend to be so enamored with x that they cannot engage with any criticism of x.

In a world where technologies are given power, authority, and funding, those with positive deterministic views (and solutionistic mindsets) often have more resources and power, leading to a mutually self-destructive polar vortex rich with righteousness that is anything but rational. This is often visible through shouting matches. Right now, the cacophony is overwhelming. And it’s breaking my brain.

Embracing Probabilistic Futures

The counter to determinism is not indeterminism. It is unsatisfying to throw our hands up in the air and say “any future is possible” because, well, that’s kinda bullshit. Some futures are more likely to occur than others. Some outcomes are more likely because of a particular technical intervention than others.

The key to understanding how technologies shape futures is to grapple holistically with how a disruption rearranges the landscape. One tool is probabilistic thinking. Given the initial context, the human fabric, and the arrangement of people and institutions, a disruption shifts the probabilities of different possible futures in different ways. Some futures become easier to obtain (the greasing of wheels) while some become harder (the addition of friction). This is what makes new technologies fascinating. They help open up and close off different possible futures.

To be clear, this way of thinking isn’t unique to technology. You can treat laws and regulations the same way, for example. Policies are often implemented with a vision of a new future; the reality of a new law is that things change but it’s not always as predictable as politicians might hope.

At both the macro and micro level, a wide range of interventions into a system rearrange possible futures. Bridges rearrange the social fabric of a city while chemotherapy rearranges the health futures of a patient. Neither determines the future. They just make some futures more likely and other futures less likely.

Context matters here. A bridge to nowhere (oh, thank you pork barrels) doesn’t have as much impact on futures as a bridge connecting two metropolises for the first time. Chemotherapy might be a general all-purpose intervention for cancer patients but the trade-offs between its potential upsides and downsides vary tremendously based on the particular cancer and the particular patient, and thus the probabilistic futures made possible are not consistent or always constructive.

When we’re dealing with medical interventions, we’re always living and breathing probabilistic futures. We hope that an intervention has a desired outcome but no responsible doctor is willing to be deterministic when treating a patient. Wise doctors think holistically about the patient and enroll them into probabilistic thinking to decide how to intervene in order to move towards desired futures. They take context into account and make probabilistically driven recommendations. (Shitty doctors can also be solutionistic in mindset, which sucks.)

Strangely, however, when it comes to a lot of other technologies, probabilistic thinking goes out the window. I find that especially odd when it comes to discussing artificial intelligence given that most AI models are the sheer manifestation of probabilistic thinking. There is no one future of LLMs (and many of those closest to these developments get this). And yet, hot damn is the rhetoric nutsoid.

The Blessings and Curses of Projectories

Even though deterministic thinking can be extraordinarily problematic, it does have value. Studying the scientists and engineers at NASA, Lisa Messeri and Janet Vertesi describe how those who embark on space missions regularly manifest what they call “projectories.” In other words, they project what they’re doing now and what they’re working on into the future in order to create for themselves a deterministic-inflected roadplan. Within scientific communities, Messeri and Vertesi argue that projectories serve a very important function. They help teams come together collaboratively to achieve majestic accomplishments. At the same time, this serves as a cognitive buffer to mitigate against uncertainty and resource instability. Those of us on the outside might reinterpret this as the power of dreaming and hoping mixed with outright naiveté.

We can dismiss these scientists’ projectories (and the projectories of the institution) as delusional, but a lot of creativity comes from delusional thinking. This is why I often have a larger tolerance for projectories and deterministic fantasy-making than many social critics.

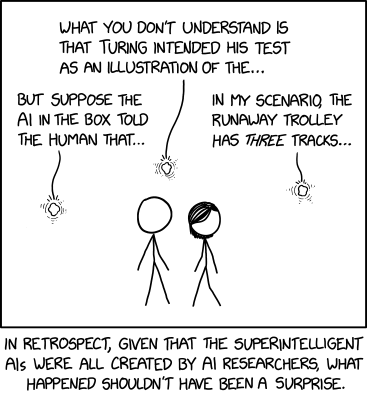

Where things get dicey is where delusional thinking is left unchecked. Guardrails are important. NASA has a lot of guardrails, starting with resource constraints and political pressure. But one of the reasons why the projectories of major AI companies are prompting intense backlash is that there are fewer other types of checks within these systems. (And it’s utterly fascinating to watch those deeply involved in these systems beg for regulation from a seemingly sincere place.)

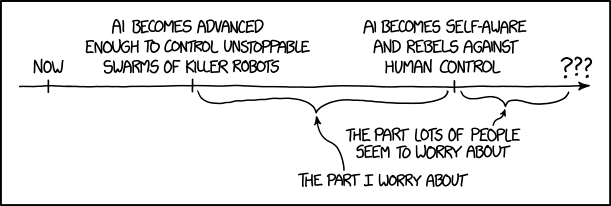

Right now, the check that is most broadly accessible is a reputational check. And so, in a fascinating twist of fate, those who are trying to push back against the development of AI systems have reverted to the same act of generating projectories that are daaaaaark and dystopic. (And to be clear, many of those who are most engaged in these alternative projectories are themselves AI researchers and developers.) The scramble for thought leadership and control of the narrative is overwhelming.

Projectories have power. Power for those who are trying to invent new futures. Power for those who are trying to mobilize action to prevent certain futures. And power for those who are trying to position themselves as brokers, thought leaders, controllers of future narratives in this moment of destabilization. But the downside to these projectories is that they can also veer way off the railroad tracks into the absurd. And when the political, social, and economic stakes are high, they can produce a frenzy that has externalities that go well beyond the technology itself. That is precisely what we’re seeing right now.

A Different Path Forward

Rather than doubling down on deterministic thinking by creating projectories as guiding lights (or demons), I find it far more personally satisfying to see projected futures as something to interrogate. That shouldn’t be surprising since I’m a researcher and there’s nothing more enticing to a social scientist than asking questions about how a particular intervention might rearrange the social order.

But I also get the urge to grab the moment by the bull’s horns and try desperately to shape it. This is the fascinating thing about a disruption like what we’re seeing with AI technologies. It rearranges power, networks, and institutions. And we’re watching a scramble by individuals and organizations to obtain power in this insanity. In these moments, there is little space for deeply reflexive thinking, for nuanced analysis, which is especially unfortunate because that’s precisely what we need right now. Not to predict futures (or to prevent them) but to build the frameworks of resilience.

Consider the best case scenario of a doctor and patient navigating a medical intervention. In such a situation, there are mechanisms for data collection (ranging from bio-specimens to verbal reflections) and an iterative process for choosing how to proceed. Each medical treatment is viewed as an intervention that needs to be considered with future steps taken based on information gleaned in the process.

How do we do this same activity at scale? How do we create significant structures to understand and evaluate the transformations that unfold as they unfold and feed those back into the development cycle? How do we build assessment protocols for evaluating new AI models?

Consider the rhetoric that surrounds how AI will disrupt XYZ industry. Some researchers, executives, and workers who know those industries are shifting their energies to ask these questions. But we don’t have mechanisms in place to really “see” let alone evaluate the disruptions. Why not? If pundits are predicting such disruptions, shouldn’t we be building the mechanisms to see if those futures are unfolding the way that determinists think they will?

Those who are building new AI systems are talking extensively about the potential for disruption (with both enthusiasm and fear) but I see very little scaffolding outside of the sciences to even reflect on how these disruptions will unfold. I know that researchers are scrambling to jump in (often with few dedicated resources) and organizational leaders are convening meetings to discuss their postures vis-a-vis these new systems, but I’m surprised by how little scaffolding there is to ensure that there are evaluations along the way.

And that brings me back to a question about all of these disruptions. Is the goal to disrupt and then let a free-for-all happen because that’s how change should occur? Or is the goal to disrupt to actually drive towards futures that people imagine? Cuz right now, even with the rhetoric of the latter, the former seems more at play.

Deterministic rhetoric lacks nuance. It also lacks an appreciation for human agency or a recognition that disruptions are situated within complex ecosystems that will shapeshift along the way. But what bothers me most about the deterministic framing that is hanging over all things AI right now is that it’s closing out opportunities for deeper situated thinking about the transformations that might unfold over the next few years.

How can we push past our current love affair with determinism so that we can have a more nuanced, thoughtful, reflexive account of technology and society?

I, for one, have no clue what’s coming down the pike. But rather than taking an optimistic or a pessimistic stance, I want to start with curiosity. I’m hoping that others will too.